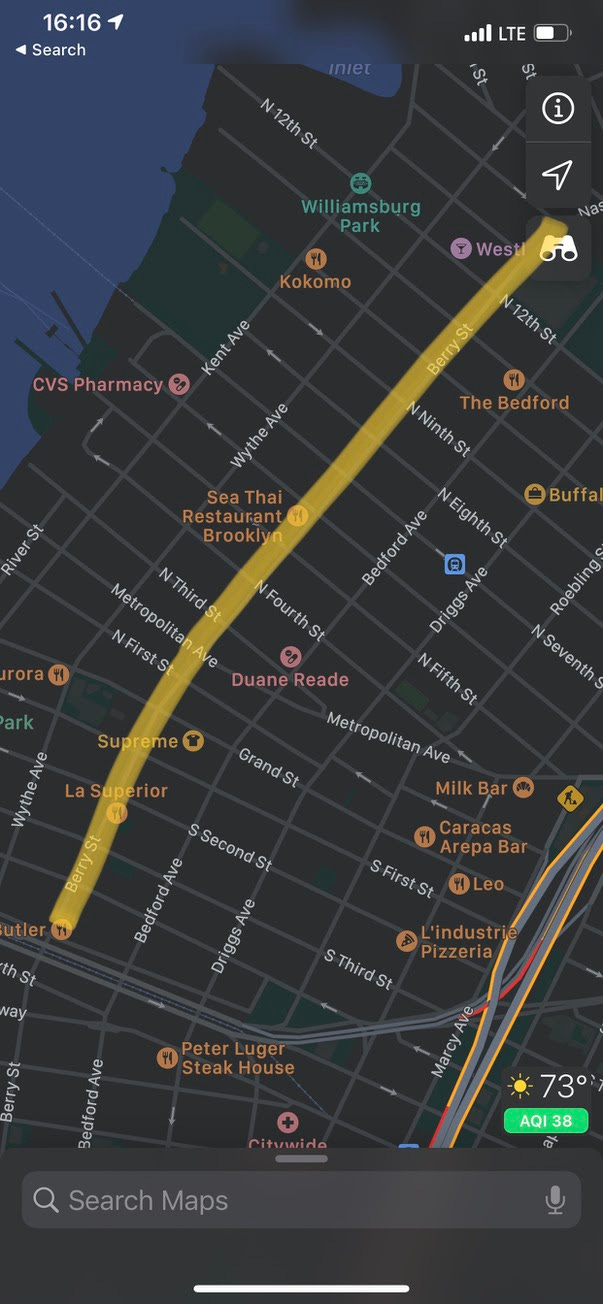

I live in Williamsburg, a neighborhood in Brooklyn, NY. Near my apartment, the city blocked off a mile-long stretch of Berry St. for its Open Streets Program. I’ve highlighted the stretch that’s blocked off here:

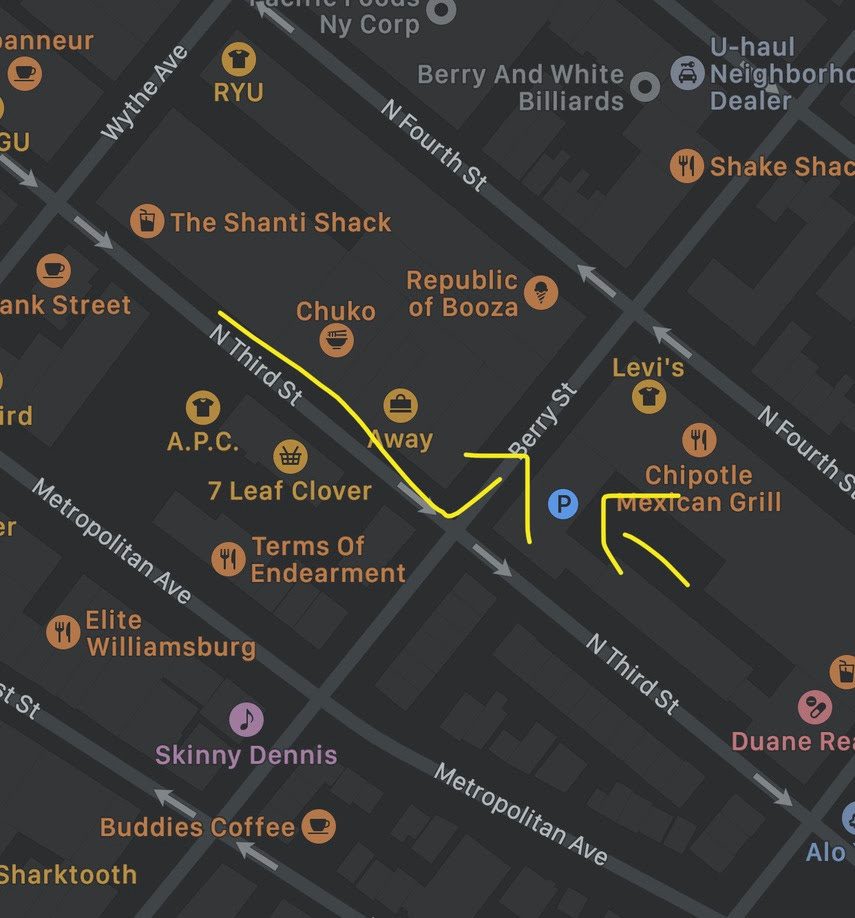

On Berry between North 3rd and 4th, there is a parking lot for Whole Foods. If you’re approaching from North 3rd, you’d take a left on Berry and then enter the parking lot on your right. I’ve highlighted the turn and the location of the parking lot here:

The catch is, while you’re making your turn, you’ll see metal barricades like this blocking your way:

The question is: what do you do now?

The right answer is that you can drive around the barrier (and move it if you have to) to get to the parking lot on that block. The reason is that you’re not “through traffic”: you’re parking on that block, not driving through it. When you leave, you’ll make the first turn off Berry and navigate back home using other streets.

Now, this is not a particularly hard problem. The logical progression goes something like this:

1. This street is blocked for the Open Streets Program.
2. The intention of this program is to limit vehicular traffic so that people can enjoy the street. It is not to prevent any vehicular access at all (as it would be if, say, there were a crane or heavy construction happening on the block).
3. There are cars parked on this block.
4. Because of (2) and (3), it is likely that this street is only blocked to “through traffic”.
5. I am ending my trip on this block.
6. Because of (5), I am not “through traffic”.
7. Because of (4) and (6), I am permitted to go around the barrier.

I see people thinking through this exact problem several times a week as I pass this intersection and others like it on Berry; invariably, they come to the same conclusion and move the barrier so they can park on the block. People find this sort of deduction natural, if not always effortless.

Can computers do this? Yes and no. They can certainly solve the logic problem if you lay out the facts and relationships for them; in fact, languages like Prolog are designed to do just that. But can a computer lay out the facts and relationships in the first place, and then solve the logic problem? No, not today.
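For the “yes” half of that answer, here is a minimal sketch in Python rather than Prolog: a toy forward-chaining rule engine that, once the facts and rules from the deduction above are written down by hand, derives the conclusion mechanically. The fact names (such as `trip_ends_on_block`) and the `forward_chain` helper are hypothetical, invented for illustration.

```python
# A toy forward-chaining engine: facts are strings, and each rule says
# "if all of these premises are known, conclude this new fact".
# Fact names are hypothetical labels for the steps of the deduction above.

Rule = tuple[frozenset[str], str]


def forward_chain(facts: set[str], rules: list[Rule]) -> set[str]:
    """Apply rules repeatedly until no new facts can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


# Steps 1, 2, 3 and 5: things observed or known about the street.
facts = {
    "street_blocked_for_open_streets",
    "program_limits_but_allows_access",
    "cars_parked_on_block",
    "trip_ends_on_block",
}

rules: list[Rule] = [
    # Step 4: from (2) and (3), the block is closed only to through traffic.
    (frozenset({"program_limits_but_allows_access", "cars_parked_on_block"}),
     "closed_only_to_through_traffic"),
    # Step 6: from (5), I am not through traffic.
    (frozenset({"trip_ends_on_block"}), "not_through_traffic"),
    # Step 7: from (4) and (6), I may go around the barrier.
    (frozenset({"closed_only_to_through_traffic", "not_through_traffic"}),
     "may_go_around_barrier"),
]

derived = forward_chain(facts, rules)
print("may_go_around_barrier" in derived)
```

The engine is a few lines of code; everything interesting lives in the hand-written facts and rules. Producing those from a glance at a street corner is the part computers can’t yet do.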

In the statistical world, people sometimes distinguish between two kinds of problem settings, called M-closed and M-open. In the M-closed world, the “true model” (the correct statistical description of how the world works) is one of the models you’re considering. In the M-open world, the true model is not among them, because it is so complex that it’s unknowable for all intents and purposes.

Elsewhere, in systems theory, closed systems are completely isolated from the rest of the environment, whereas open systems interact with the outside world.

I think both of these definitions suggest an analogue in AI. We can define “closed” systems as systems where all the contexts and possible decisions are enumerable and finite. A computer vision system might have a limited set of objects that it is able to detect in an image. A machine translation system may have a dictionary for each language that it recognizes. A self-driving system may have a repertoire of actions it can take in response to a finite set of contexts. In closed systems, both the inputs and outputs are constrained. They may be constrained at mind-bogglingly large limits, like GPT-3’s capacity to generate text, but they are still constrained.

An “open” system is one in which the inputs and outputs are unbounded. Open systems require applying first-principles logic to novel scenarios and contexts.

Almost all of AI today is a form of pattern recognition. AI turns traditional programming on its head, as this somewhat famous diagram illustrates:

Almost every AI system relies on vast quantities of training pairs: inputs and their desired outputs. For example, when you solve reCAPTCHAs like these, you are supplying labels for training data:

But no matter how many things an AI system can recognize, that set is still bounded by the training data it is given. This is why even the field’s foremost practitioners are circumspect about its capabilities:

More skeptical folks would relabel the “intelligence” or “learning” in AI/ML as simply “pattern recognition.”
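To make the “pattern recognition” framing concrete, here is a toy sketch in Python. It uses a 1-nearest-neighbor classifier as a stand-in for real AI systems (which mostly use neural networks), and the training pairs and their two-number “features” are made up for illustration. It contrasts a hand-written rule, as in traditional programming, with answers inferred from labeled pairs, and shows that the classifier can only ever answer with a label it was trained on.

```python
# A toy "pattern recognition" system: a 1-nearest-neighbor classifier.
# Training examples are hypothetical (feature vector, label) pairs, like the
# labeled images people supply by solving reCAPTCHAs.
import math

Example = tuple[tuple[float, ...], str]


def nearest_label(training: list[Example], x: tuple[float, ...]) -> str:
    """Return the label of the training example closest to x."""
    _, label = min(training, key=lambda ex: math.dist(ex[0], x))
    return label


# Made-up two-number "features"; the values are illustrative only.
training: list[Example] = [
    ((0.9, 0.1), "bird"),
    ((0.8, 0.2), "bird"),
    ((0.1, 0.9), "traffic light"),
    ((0.2, 0.8), "traffic light"),
]


# Traditional programming: a person writes the rule mapping input to answer.
def handwritten_rule(x: tuple[float, ...]) -> str:
    return "bird" if x[0] > x[1] else "traffic light"


# Machine learning flips this: the "rule" is implied by the labeled pairs.
print(nearest_label(training, (0.85, 0.15)))  # bird
print(handwritten_rule((0.85, 0.15)))         # bird

# The output set is closed: an input unlike anything in training (say, a
# barricade) still gets one of the labels seen in training.
labels = {label for _, label in training}
print(nearest_label(training, (0.5, 0.5)) in labels)  # True
```

Real systems differ enormously in scale, but the closed output set is the same: a classifier trained on these labels has no way to say “this is a barricade, and I’m allowed to go around it.”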

Meanwhile, much of what we think of as intelligence, and take for granted in our day-to-day existence, isn’t pattern recognition at all. It’s reasoning from first principles.

It’s hard to imagine how one would train a computer to recognize and reason through the example above without either supplying a large set of suitably similar examples, or hard-coding rules for this specific problem and then doing the same again, one problem at a time, for every other situation the computer might face.

This is why I’m a bit skeptical of self-driving cars. Not because the engineering problems are out of reach for the technology that exists today. Given enough training data, I’m sure that the sensors, computer vision systems, and robotics that we have today could be assembled into a system that could handle any normal driving task.

But some percentage of driving is not, well, just driving. As in any human endeavor, some of it is reasoning, as the example above illustrates. Some situations will never have been encountered before, and it will take reasoning from first principles to arrive at a good solution.

Is “open” reasoning forever out of reach for computers? Certainly not. After all, humans do it all the time, and whatever is going on in our brains follows the same rules as a Turing machine or the lambda calculus: that is, the same rules that govern the CPUs powering our computers.

I’m reminded of this comic from XKCD, which came out in September 2014. It is woefully out of date now; computer vision can easily recognize birds in images. At the time, however, it would have taken “a research team and five years.”

Today, there isn’t much traction on abstract reasoning or “common sense.” But that’s not to say there never will be. It’s certainly possible that in seven years we’ll look back on the blog post you’re reading now as being from “another era,” just as we now see that XKCD comic.

As always, thank you for reading. If you have any questions or comments, please do email me or leave a comment below.

If you liked this, please subscribe or tell a friend!

Thanks,

Tom
