A conversation about polls

A lightly edited transcript of a back-and-forth about what happened with polling error this year

A few college buddies and I chatted about the polls, the forecasts, and what went so wrong with an election that was supposed to be a blowout:

C: Man, the polling autopsy in the coming months is going to be a doozy

TV: i'm a bit on the nate silver train here. most years have systematic polling error. just hard to guess which way they'll break in any given year.

TV: on average 2 points of bias in presidential polls

B: So like. Why does Nate Silver have a platform then?

TV: because you can quantify about how much bias you expect and fold that into your uncertainty. that's why he had biden so favored. his model estimated that biden could survive a polling error as large as 2016 and still win, and he did
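[note: a rough sketch of what "folding bias into your uncertainty" can look like. This is NOT 538's actual model, just a toy version. It assumes the true margin is normally distributed around the polling lead, and that sampling error and systematic bias are independent normal errors whose variances add. The `sampling_sd` and `bias_sd` values are made-up numbers for illustration.]

```python
import math


def normal_cdf(x: float) -> float:
    """Standard normal CDF, computed with math.erf."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def win_probability(poll_lead: float, sampling_sd: float, bias_sd: float) -> float:
    """P(true margin > 0), modeling the true margin as
    Normal(poll_lead, sqrt(sampling_sd**2 + bias_sd**2)).
    Assumption: sampling error and systematic bias are independent and normal."""
    total_sd = math.sqrt(sampling_sd ** 2 + bias_sd ** 2)
    return normal_cdf(poll_lead / total_sd)


# Hypothetical inputs: an 8-point polling lead.
lead = 8.0
ignoring_bias = win_probability(lead, sampling_sd=2.0, bias_sd=0.0)
with_bias = win_probability(lead, sampling_sd=2.0, bias_sd=3.0)
print(f"ignoring bias:  {ignoring_bias:.4f}")
print(f"including bias: {with_bias:.4f}")
```

Adding the bias term widens the distribution, so a big lead is still "favored" but no longer a lock.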

TV: i think the title of his last post before the election was something like "biden favored, fine line between nail-biter and landslide" -- so, yeah, that was pretty much right

C: I think the bigger question is their utility on a non-aggregate level

C: Nate Cohn pointed out that Biden went to Iowa and Ohio, I think, during the home stretch, when the polls there were way, way off

TV: take the reported margin of error, double it, and if that range tells you something, then it's useful. if not, then it's not
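[note: a literal, toy reading of this rule of thumb. It treats the reported MOE as applying directly to the lead, which is a simplification (a reported MOE usually refers to one candidate's share, and the error on a two-candidate lead is larger). The function names and example polls are hypothetical.]

```python
def doubled_interval(lead: float, reported_moe: float) -> tuple[float, float]:
    """Range for the lead (in points) after doubling the reported margin of error.
    Simplification: the MOE is applied directly to the lead."""
    half_width = 2.0 * reported_moe
    return (lead - half_width, lead + half_width)


def tells_you_something(lead: float, reported_moe: float) -> bool:
    """True if the doubled range excludes a tie, i.e. the same candidate
    is ahead at both ends of the range."""
    low, high = doubled_interval(lead, reported_moe)
    return low > 0 or high < 0


# Hypothetical polls: (lead in points, reported MOE in points)
for lead, moe in [(10.0, 3.0), (4.0, 3.0), (-8.0, 3.5)]:
    print(lead, moe, doubled_interval(lead, moe), tells_you_something(lead, moe))
```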

C: 🧐

C: Explain in a way that dumb ppl like me can understand plz

TV: okay so error can come in one of two forms. bias (systematically off: think of a tight grouping of darts that's a few inches from the bullseye; when you average the throws, the error doesn't go away) and variance (on target on average: think of darts scattered all over the board; when you average the throws, the error does go away)
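[note: a quick simulation of the darts analogy. The "true" value, noise levels, and the 2-point bias are made-up numbers; the 2 points just echoes the historical average mentioned above. Averaging many noisy-but-centered polls gets you close to the truth; averaging many polls that share the same offset just gets you the offset.]

```python
import random
import statistics

random.seed(0)

TRUE_VALUE = 50.0  # hypothetical true vote share, in %
N_POLLS = 1000
BIAS = 2.0         # shared offset, in points

# Variance only: each poll is noisy but centered on the truth (darts all over the board).
variance_only = [random.gauss(TRUE_VALUE, 3.0) for _ in range(N_POLLS)]

# Bias: every poll shares the same +2-point offset, with little noise (tight but off-center).
biased = [random.gauss(TRUE_VALUE + BIAS, 0.5) for _ in range(N_POLLS)]

print("variance-only error of the average:", round(statistics.mean(variance_only) - TRUE_VALUE, 2))
print("biased error of the average:       ", round(statistics.mean(biased) - TRUE_VALUE, 2))
```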

TV: total survey error is about 2x the reported margin of error

TV: survey methods try most of all to minimize bias. this is why you do things like weighting and poststratification. if some group should be 40% of your sample but they're only 20%, you upweight them by ~2x
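[note: a bare-bones sketch of the 40%-vs-20% example, weighting on a single variable. Real pollsters weight on many variables at once (e.g. with raking), which this ignores. The groups "A"/"B" and the support numbers are hypothetical.]

```python
from collections import Counter

# Hypothetical sample: group "A" should be 40% of the population but is 20% of respondents.
population_share = {"A": 0.40, "B": 0.60}
sample = ["A"] * 20 + ["B"] * 80

counts = Counter(sample)
n = len(sample)
sample_share = {g: counts[g] / n for g in population_share}

# Cell weight = target share / observed share (one-variable poststratification).
weights = {g: population_share[g] / sample_share[g] for g in population_share}

# Hypothetical support for some candidate within each group.
support = {"A": 0.70, "B": 0.40}
unweighted = sum(support[g] * sample_share[g] for g in support)
weighted = sum(support[g] * sample_share[g] * weights[g] for g in support)

print("weights:", weights)
print(f"unweighted estimate: {unweighted:.2f}, weighted estimate: {weighted:.2f}")
```

This only corrects for groups you actually measured; it can't fix bias from things that aren't in the data.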

TV: you can't really measure all the ways that bias might creep into your data (e.g. social desirability bias, partisan nonresponse, or simply that the things that determine how people respond aren't measured in your data)

TV: so the margin of error that gets reported at the end of the day really only captures the variance component

TV: but there's also a bias component, about 2 points on average, that's almost as large as the variance component

TV: so you should take any poll, and essentially double the reported MOE
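[note: a sketch of the arithmetic. The formula below is the textbook 95% margin of error for a single proportion from a simple random sample, which covers sampling variance only; many pollsters also inflate it for design effects, which this ignores. The doubling is just the rule of thumb from the chat, not a formula.]

```python
import math


def reported_moe(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Textbook 95% margin of error for one proportion, in percentage points.
    Reflects sampling variance only (no bias term)."""
    return 100.0 * z * math.sqrt(p * (1.0 - p) / n)


def rule_of_thumb_total_error(n: int) -> float:
    """Heuristic from the chat: total survey error is roughly 2x the reported MOE."""
    return 2.0 * reported_moe(n)


for n in (400, 800, 1500):
    print(f"n={n}: reported MOE ~{reported_moe(n):.1f} pts, doubled ~{rule_of_thumb_total_error(n):.1f} pts")
```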

M: @Thomas Vladeck every single projected swing state had a pro-Trump error

M: Tom i think you can’t defend polling in an election with this much correlated error

TV: i'm not sure why not. first of all, everything is relative to some counterfactual. what would you do without polling, and is polling better or worse than that? that's the pertinent question. second, this election did have bad systematic bias, but the amount of systematic bias is not unheard of relative to past elections [note: i contradict myself on this point later on!]. so if you knew / were expecting that, you could generate good probabilistic forecasts

N: Yeah, I mean, Biden won, and that was one of a lot of different potential outcomes in the 538 forecast. Nate Silver was right because he essentially couldn’t be provably wrong

TV: i disagree with the last clause. this is like saying weather forecasters can't be provably wrong when they say there is a 10% chance of rain. sure, not with one event. but they do it a lot, and you can see if forecasts are (a) well calibrated and (b) show "skill" (term of art), meaning their forecasts are meaningfully different for higher and lower probability events
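[note: one standard way to score this is the Brier score, and "skill" is commonly measured against a reference forecast, here one that always predicts the base rate. A sketch, with made-up forecasts and outcomes; it assumes the outcomes aren't all identical, otherwise the reference score is zero.]

```python
def brier_score(probs: list[float], outcomes: list[int]) -> float:
    """Mean squared difference between forecast probability and outcome (1 = happened, 0 = didn't).
    Lower is better."""
    return sum((p - o) ** 2 for p, o in zip(probs, outcomes)) / len(probs)


def brier_skill_score(probs: list[float], outcomes: list[int]) -> float:
    """1 - BS / BS_ref, where the reference always forecasts the observed base rate.
    Positive means the forecaster beats that naive baseline; 0 means no skill."""
    base_rate = sum(outcomes) / len(outcomes)
    reference = brier_score([base_rate] * len(outcomes), outcomes)
    return 1.0 - brier_score(probs, outcomes) / reference


# Hypothetical forecasts (e.g. chance of rain) and what actually happened.
probs = [0.9, 0.8, 0.1, 0.2, 0.7, 0.3]
outcomes = [1, 1, 0, 0, 1, 0]
print(f"Brier score: {brier_score(probs, outcomes):.3f}")
print(f"skill vs. base rate: {brier_skill_score(probs, outcomes):.3f}")
```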

N: But he’s not playing a game of right or wrong, right?

TV: i mean he's playing a game of "for all the forecasts for which he says X%, it should happen about X% of the time"
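[note: a sketch of checking that, i.e. a calibration table: bin the forecasts, then compare the average forecast in each bin with how often the event actually happened. The data here come from a simulated, perfectly calibrated forecaster, so the two columns should roughly match; with real forecasts you'd plug in the published probabilities and outcomes instead.]

```python
import random
from collections import defaultdict


def calibration_table(probs, outcomes, n_bins=10):
    """Return rows of (bin_low, bin_high, mean_forecast, observed_frequency, count)
    for equal-width probability bins that contain at least one forecast."""
    bins = defaultdict(list)
    for p, o in zip(probs, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the top bin
        bins[idx].append((p, o))
    rows = []
    for idx in sorted(bins):
        pairs = bins[idx]
        mean_forecast = sum(p for p, _ in pairs) / len(pairs)
        observed = sum(o for _, o in pairs) / len(pairs)
        rows.append((idx / n_bins, (idx + 1) / n_bins, mean_forecast, observed, len(pairs)))
    return rows


# Simulated forecaster: says X%, and the event happens with probability X.
random.seed(1)
sim_probs = [random.random() for _ in range(20000)]
sim_outcomes = [1 if random.random() < p else 0 for p in sim_probs]

for low, high, mean_f, obs, count in calibration_table(sim_probs, sim_outcomes):
    print(f"{low:.1f}-{high:.1f}: forecast {mean_f:.2f}, happened {obs:.2f} (n={count})")
```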

N: right.

W: The answer to this is that our anxious eyeballs were glued to his website, hoping for reassurance that this was all going to be over soon, and hopefully in landslide fashion. I think the key takeaway is that there are factors that influence how people vote that we don’t understand, or at least that we have a hard time building a model that spits out reliable information

W: I will probably follow the polls and 538 just as anxiously and religiously in 2024 as I did this year and I did 4 years ago, but I think I will try to be less confident in what they are telling me.

TV: I mean but why wouldn't you trust the probabilities from 538? Both in 2016 and this year, the outcome that happened had a meaningful probability attached to it

C: Let’s put aside 538 for a second. @Thomas Vladeck how would you evaluate the election polling this year?

C: And I don’t mean relative to some scenario in which we don’t use polling at all and just count Trump yard signs or some bullshit like that

TV: no question that in a vacuum, they were bad

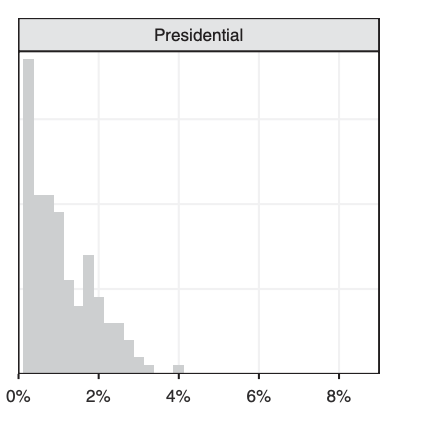

TV: if you look at historical presidential bias, it's looking like these were perhaps the worst ever: [note: here is where I contradict myself!]

TV: this is a histogram of bias in presidential polls. this election is looking to be about 5 points on average

C: Well I, for one, am looking forward to smart people telling me why that happened

TV: me too!